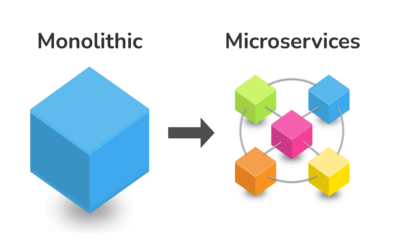

Providing the right infrastructure for a project, one that scales well and is robust and secure, is not a straightforward task. Google Cloud has taken significant steps toward delivering solutions where the supporting infrastructure is managed automatically. One of its recent offerings is Cloud Run, which not only runs your app as a Docker container but also requires no management of virtual machines or Google Kubernetes Engine. This could be a good fit for a wide range of apps, since the migration effort is very low and the work of keeping the infrastructure up to date can be significantly reduced.

As an example, we tried Cloud Run with one of our deployed Docker images, which was running on a VM inside Google Cloud. That VM had Docker installed and ran three containers: NGINX, Let's Encrypt, and a Tomcat server.

First, we needed to create a new service. In the Google Cloud console, Cloud Run now has its own section in the main menu. The first thing you will notice is that, if you already use Container Registry, your images are listed automatically. Cloud Run sends requests to the port given by the `PORT` environment variable, which defaults to 8080; our Tomcat Docker image already listened on 8080, so there was no need to change anything. Then we configured the memory allocated to the app and started the service. It started very quickly and was as responsive as the same app on the VM. Moreover, Cloud Run exposes services over HTTPS, so no additional HTTPS configuration was required for this example.
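The only thing Cloud Run required of our image was that it listen on the expected port. Its container contract is that the app listens on `0.0.0.0` at the port given in the `PORT` environment variable, which defaults to 8080. The sketch below illustrates that contract with a hypothetical stand-in app written with Python's standard library (it is not our Tomcat service); `get_port`, `HelloHandler` and `main` are illustrative names.

```python
import os
from collections.abc import Mapping
from http.server import BaseHTTPRequestHandler, HTTPServer


def get_port(environ: Mapping[str, str] = os.environ, default: int = 8080) -> int:
    """Return the port Cloud Run sends requests to: $PORT if set, else 8080."""
    return int(environ.get("PORT", default))


class HelloHandler(BaseHTTPRequestHandler):
    """Hypothetical placeholder app: answers every GET with a short text body."""

    def do_GET(self) -> None:
        body = b"Hello from Cloud Run\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def main() -> None:
    # Cloud Run's container contract requires listening on 0.0.0.0,
    # not only on localhost, at the port taken from $PORT.
    server = HTTPServer(("0.0.0.0", get_port()), HelloHandler)
    server.serve_forever()
```

Calling `main()`, for example from the image's start command, serves requests. An image that hard-codes 8080, like our Tomcat one, also works as long as the service keeps the default port.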

Once the service is deployed, the console gives you some useful tools for reviewing it: metrics (number of requests, CPU and memory usage), revisions, and logs. Updating to a new version is straightforward too. Once a new version of the image is uploaded, you deploy it to the same service, which creates a new revision. There is no need to create a new service or go through any other process, and the console shows the revision history of your service.

Finally, the cost also seems to scale well, because by default you are billed for the CPU and memory the service uses while it is handling requests, rather than for an always-on machine. You will probably miss having full control of the server, or command-line tools to access the container itself, but if you want a truly serverless solution for a stateless service, this could be a good choice.
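To make the cost argument concrete, here is a back-of-the-envelope sketch. It assumes Cloud Run's default request-based billing: you pay per vCPU-second and per GiB-second while an instance is handling requests, plus a per-request fee. The rates are parameters you would fill in from Google's current pricing page (none are quoted here). `Rates` and `estimate_cost` are hypothetical helpers, and the free tier, request concurrency and rounding of billable time are all ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Unit prices; assumed to come from the current Cloud Run pricing page."""
    per_vcpu_second: float
    per_gib_second: float
    per_million_requests: float


def estimate_cost(
    requests: int,
    avg_busy_seconds_per_request: float,
    vcpus: float,
    memory_gib: float,
    rates: Rates,
) -> float:
    """Rough cost for a billing period under request-based billing.

    Assumes each request keeps one instance busy for
    avg_busy_seconds_per_request and that requests never overlap
    (concurrency would lower billable time). Ignores the free tier and
    any rounding of billable time.
    """
    billable_seconds = requests * avg_busy_seconds_per_request
    cpu_cost = billable_seconds * vcpus * rates.per_vcpu_second
    memory_cost = billable_seconds * memory_gib * rates.per_gib_second
    request_cost = requests / 1_000_000 * rates.per_million_requests
    return cpu_cost + memory_cost + request_cost
```

Every term is proportional to traffic, which is what "scales well" means here: with no minimum instances configured, a service that receives no requests incurs no CPU or memory charges, unlike a VM that is billed for as long as it runs.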

Photo by Goran Ivos on Unsplash